TL;DR – code is here on GitHub

A customer I’ve been talking to recently asked me to work with them on a reference implementation (more of a proof of concept) for the next-generation backend of a game they currently have. In short, they needed a platform that would meet the following requirements:

- accept incoming messages from game servers

- incoming message rate would be a couple of hundred messages per minute

- we need to store game-session-related events, so each piece of data is tied to a specific game session that can run for 20 to 30 minutes

- scalability and high availability (of course)

- data needs to be displayed in real time (e.g. live leaderboards)

- data needs to be stored for later analysis (e.g. best players of the week)

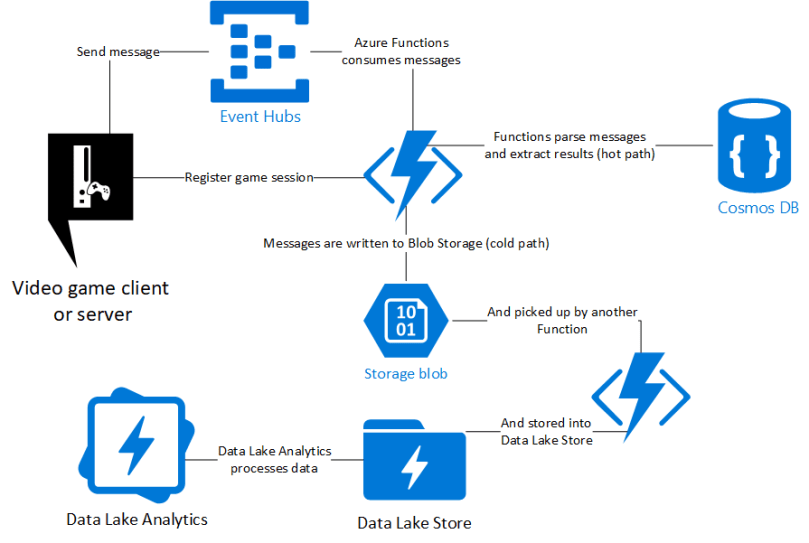

This is a perfect example of a problem that can be solved with the lambda architecture pattern. After some research and experimentation, we ended up using the following Azure services:

- Event Hubs that will be used as the ingestion mechanism for the data

- Functions using Consumption Plan to save us from worrying about scalability

- Cosmos DB and its Time To Live functionality for the real time data (“hot” path)

- Data Lake Store will be used to store the data for later analysis (“cold” path)

- Data Lake Analytics and its U-SQL query language to analyze the data

- Node.js as the language for the code running on Functions
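To make the hot/cold split a bit more concrete, here is a minimal Node.js sketch of what a Function triggered by Event Hubs could do with each batch of messages. It is not the code from the repository: the event shape (`sessionId`, `eventType`, `playerId`, `timestamp`), the `hotDocuments` output binding name, the one-hour TTL and the injected `appendCold` writer are all assumptions made for illustration. The only service behaviour it relies on is that Cosmos DB expires an item `ttl` seconds after its last write when Time To Live is enabled on the container.

```javascript
// Assumption: hot-path data is only interesting for about an hour.
const HOT_TTL_SECONDS = 60 * 60;

// Hot path: shape an event as a Cosmos DB document.
// The `ttl` property (in seconds) lets Cosmos DB delete the item automatically,
// provided Time To Live is enabled on the container.
function toHotDocument(event, ttlSeconds = HOT_TTL_SECONDS) {
  return {
    id: `${event.sessionId}-${event.playerId}-${event.timestamp}`, // hypothetical id scheme
    sessionId: event.sessionId,
    eventType: event.eventType,
    playerId: event.playerId,
    timestamp: event.timestamp,
    ttl: ttlSeconds,
  };
}

// Cold path: one CSV line per event, which is easy to read back with U-SQL.
// Assumption: field values never contain commas or newlines.
function toColdLine(event) {
  return [event.sessionId, event.eventType, event.playerId, event.timestamp].join(",");
}

// Builds the Function handler. `appendCold` is a hypothetical async function
// that appends text to a file in Data Lake Store; how it does so is left out.
function createHandler({ appendCold }) {
  return async function handler(context, eventHubMessages) {
    // The trigger delivers either a single message or a batch.
    const events = Array.isArray(eventHubMessages)
      ? eventHubMessages
      : [eventHubMessages];

    // Hot path: hand the documents to a Cosmos DB output binding
    // (binding name `hotDocuments` is an assumption).
    context.bindings.hotDocuments = events.map((e) => toHotDocument(e));

    // Cold path: append the raw events for later batch analysis.
    await appendCold(events.map(toColdLine).join("\n") + "\n");
  };
}

// In a Function app this would be exported along the lines of:
//   module.exports = createHandler({ appendCold: someDataLakeWriter });
```

Keeping the two mapping functions pure makes them easy to test without any Azure resources, and the same incoming event feeds both paths, which is the core idea of the lambda architecture.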

This is by no means the only (or even the best!) solution (e.g. one could also use Databricks, Stream Analytics or HDInsight); however, for our purposes it worked quite well.

Feel free to check out the code at the GitHub repository here: https://github.com/dgkanatsios/GameAnalyticsEventHubFunctionsCosmosDatalake